Zero-downtime deployments are a baseline expectation for any production Rails application. Adding database indexes without locking your tables is a key part of that. However, the standard way to do it in Rails opts the migration out of its transaction, and that hides a subtle failure mode. A migration that fails halfway can leave your database out of sync with Rails' record of which migrations have run, and it forces manual intervention at the worst possible time.

The Problem With Blocking Index Creation

When you integrate strong_migrations into a Rails project, one of the first warnings you'll encounter is this:

Adding an index non-concurrently blocks writes.

On a large table, a standard add_index blocks writes for as long as the index takes to build. That can be a few seconds on a modest table and much longer on a big one. In production, that means downtime. The fix is straightforward: use PostgreSQL's CREATE INDEX CONCURRENTLY, which Rails exposes as algorithm: :concurrently.

```ruby
class AddIndexToUsersEmail < ActiveRecord::Migration[7.0]
  disable_ddl_transaction!

  def change
    add_index :users, :email, algorithm: :concurrently
  end
end
```

The disable_ddl_transaction! call is mandatory here. By default, Rails wraps each migration in a transaction on databases with transactional DDL, such as PostgreSQL. That transaction is a safety net: if anything goes wrong mid-migration, everything rolls back automatically. But PostgreSQL refuses to run CREATE INDEX CONCURRENTLY inside a transaction block, so we opt out.

This is a well-known pattern. What's less discussed is what happens when things go wrong inside a migration that has opted out of transactions.

The Hidden Danger: Partial Failures With No Rollback

Imagine you have multiple operations to perform on the users table:

class AddIndicesToUsersEmailAndPhoneNumber < ActiveRecord::Migration[7.0]

disable_ddl_transaction!

def change

add_index :users, :email, algorithm: :concurrently

raise "something goes wrong here"

add_column :users, :phone_number

end

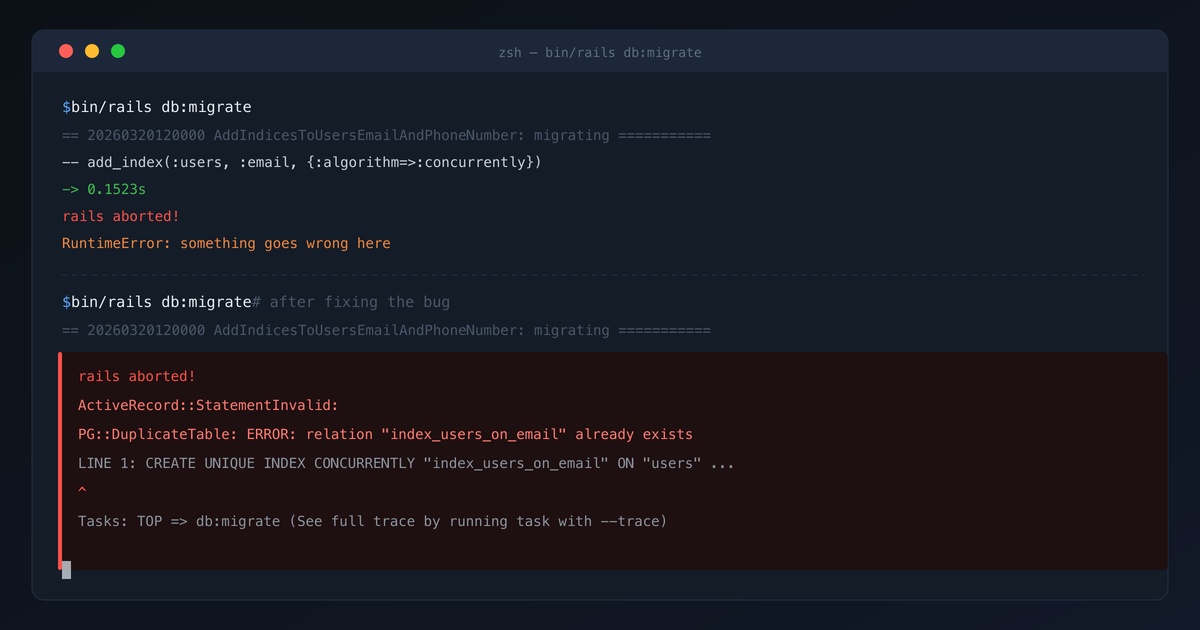

endThe migration fails on the second statement. So far, so expected. You fix the issue and run db:migrate again.

This time, you get a different error entirely:

PG::DuplicateTable: ERROR: relation "index_users_on_email" already exists

Because there was no wrapping transaction, the add_index took effect the moment it completed. When the migration then failed, there was nothing to roll back. The index now exists in the database. However, Rails only records a migration's version in schema_migrations after the migration finishes, so it still considers this one pending. On the next run, it tries to create the same index again, and PostgreSQL refuses.

The only path forward is to manually drop the orphaned index directly on the database. In a staging environment, this is an inconvenience. In production, it's a serious operational risk.
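The toy model below is plain Ruby and shows why the rerun fails when an ordinary migration would not. It is a sketch, not Rails code. ToyDatabase, DuplicateIndex, FAILING_MIGRATION, and attempt are hypothetical names. The "database" is only a set of index names, and the transactional: flag stands in for whether disable_ddl_transaction! is used.

```ruby
require "set"

# Toy model, not Rails: the "database" only tracks index names, and
# DuplicateIndex stands in for PG::DuplicateTable.
class DuplicateIndex < StandardError; end

class ToyDatabase
  attr_reader :indexes

  def initialize
    @indexes = Set.new
  end

  def create_index(name)
    raise DuplicateIndex, %(relation "#{name}" already exists) if @indexes.include?(name)

    @indexes << name
  end

  # transactional: true mimics a default Rails migration (undo everything on
  # error); transactional: false mimics disable_ddl_transaction!, where each
  # statement sticks the moment it succeeds.
  def run_migration(transactional:)
    snapshot = @indexes.dup
    yield self
  rescue StandardError
    @indexes = snapshot if transactional
    raise
  end
end

# Mirrors AddIndicesToUsersEmailAndPhoneNumber: the index is created, then
# the migration fails before it can finish.
FAILING_MIGRATION = lambda do |db|
  db.create_index("index_users_on_email")
  raise "something goes wrong here"
end

# Runs a migration and returns the class of the error it raised (or :ok).
def attempt(db, transactional:, &migration)
  db.run_migration(transactional: transactional, &migration)
  :ok
rescue StandardError => e
  e.class
end

db = ToyDatabase.new
p attempt(db, transactional: false, &FAILING_MIGRATION) # => RuntimeError
p attempt(db, transactional: false, &FAILING_MIGRATION) # => DuplicateIndex
p db.indexes.to_a                                        # => ["index_users_on_email"]
```

Running the same two calls with transactional: true returns RuntimeError both times and leaves the set empty. That rollback is exactly what disable_ddl_transaction! gives up.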

The Fix: One Statement Per Disabled-Transaction Migration

The rule is simple: each migration using disable_ddl_transaction! should contain exactly one statement. If you need to perform two operations, write two migrations.

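```ruby
# Migration 1: runs outside a transaction, so it holds exactly one statement
class AddIndexToUsersEmail < ActiveRecord::Migration[7.0]
  disable_ddl_transaction!

  def change
    add_index :users, :email, algorithm: :concurrently
  end
end

# Migration 2: an ordinary migration, wrapped in a transaction as usual
class AddPhoneNumberToUsers < ActiveRecord::Migration[7.0]
  def change
    add_column :users, :phone_number, :string
  end
end
```

This isolates failures. Each migration now either completes and is recorded, or fails without having changed anything. Migration 1 has only one statement that can fail, and Migration 2 runs inside a normal transaction. A failed run can simply be retried once the problem is fixed, which gives you back the safety guarantee that transactions normally provide. There is one caveat. If CREATE INDEX CONCURRENTLY itself fails partway, for example on a deadlock or a uniqueness violation, PostgreSQL can leave behind an INVALID index. That index has to be dropped before the migration is retried.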

Enforcing It Automatically With a Custom RuboCop Cop

Good principles are best enforced at the tooling level, not through code review comments. The full implementation is available as a GitHub Gist — a custom RuboCop cop that flags any disable_ddl_transaction! migration whose change, up, or down method contains more than one statement.

Drop it into your custom_cops/ directory, wire it up in .rubocop.yml, and your CI pipeline will catch violations before they ever reach production.
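The Gist is not reproduced here. As a rough stand-in, the sketch below applies the same rule with Ruby's standard-library Ripper parser instead of RuboCop, so it runs without any gems. Everything in it is hypothetical, including violations, each_node, and the check_migrations.rb filename. It only checks change, up, and down methods in a class that calls disable_ddl_transaction!. It is also a plain script, not a cop you can register in .rubocop.yml.

```ruby
require "ripper"

# Hypothetical stand-in for the RuboCop cop: same rule, but built on Ruby's
# standard-library parser (Ripper) so it runs without any gems.
CHECKED_METHODS = %w[change up down].freeze

# Depth-first walk over Ripper's nested-array S-expressions, yielding every
# node whose first element is a Symbol (e.g. [:class, ...], [:def, ...]).
def each_node(node, &block)
  return unless node.is_a?(Array)

  yield node if node.first.is_a?(Symbol)
  node.each { |child| each_node(child, &block) }
end

# True if the subtree contains a call to disable_ddl_transaction!.
def disables_ddl_transaction?(node)
  each_node(node) do |n|
    return true if n[0] == :@ident && n[1] == "disable_ddl_transaction!"
  end
  false
end

# Returns [[method_name, statement_count], ...] for each change/up/down
# method with more than one statement in a class that opts out of DDL
# transactions. Unparseable source yields [] (Ripper.sexp returns nil).
def violations(source)
  offenses = []
  each_node(Ripper.sexp(source)) do |klass|
    next unless klass[0] == :class && disables_ddl_transaction?(klass)

    each_node(klass) do |node|
      next unless node[0] == :def && CHECKED_METHODS.include?(node[1][1])

      # node[3] is [:bodystmt, statements, rescue, else, ensure]
      statements = node[3][1].reject { |stmt| stmt == [:void_stmt] }
      offenses << [node[1][1], statements.size] if statements.size > 1
    end
  end
  offenses
end

# Usage: ruby check_migrations.rb db/migrate/*.rb  (exits 1 on any violation)
if __FILE__ == $PROGRAM_NAME
  failed = false
  ARGV.each do |path|
    violations(File.read(path)).each do |name, count|
      warn "#{path}: #{name} has #{count} statements under disable_ddl_transaction!"
      failed = true
    end
  end
  exit(1) if failed
end
```

A real RuboCop cop is still the better home for this rule. It gets RuboCop's configuration, inline `# rubocop:disable` comments, and the CI setup you already run.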

The Takeaway

disable_ddl_transaction! is not just a PostgreSQL formality — it fundamentally changes the failure semantics of your migration. Once you opt out of transactions, you lose automatic rollback, and every statement becomes permanent the moment it executes. Keep these migrations to a single statement, enforce it with automation, and you'll never find yourself manually cleaning up orphaned indexes in a production database.